Agenta vs Fallom

Side-by-side comparison to help you choose the right tool.

Agenta centralizes LLM development, enhancing collaboration and reliability through structured, systematic workflows.

Last updated: March 1, 2026

Fallom provides real-time observability and cost tracking for your LLM applications.

Last updated: February 28, 2026

Feature Comparison

Agenta

Centralized Prompt Management

Agenta centralizes prompt storage, evaluation, and tracing within a single platform. This feature eliminates the chaos of scattered prompts across various tools, enabling teams to manage their prompts efficiently and maintain a clear overview of their work.

Automated Evaluation

With Agenta's automated evaluation capabilities, teams can create systematic processes to run experiments, track results, and validate every change made to their prompts. This feature reduces guesswork and increases confidence in the development process.

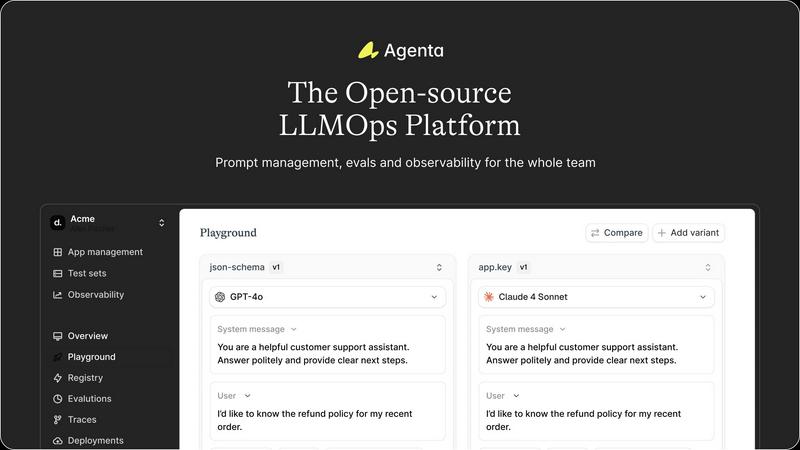
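
A systematic evaluation run of the kind described under Automated Evaluation can be sketched in a few lines. This is a minimal illustration, not Agenta's API: the names `exact_match`, `evaluate`, `variant_a`, and `variant_b` are hypothetical, and the two variants return canned answers where a real setup would call an LLM with a prompt.

```python
def exact_match(expected, actual):
    """Score 1.0 when the output matches the expected answer exactly, else 0.0."""
    return 1.0 if expected.strip() == actual.strip() else 0.0


def evaluate(variant_fn, test_set, scorer=exact_match):
    """Run one prompt variant over every test case and return its mean score."""
    scores = [scorer(case["expected"], variant_fn(case["input"])) for case in test_set]
    return sum(scores) / len(scores)


# Hypothetical stand-ins for two prompt variants (canned outputs, no LLM call).
def variant_a(question):
    return {"2+2": "4", "capital of France": "Paris", "3*3": "6"}.get(question, "")


def variant_b(question):
    return {"2+2": "4", "capital of France": "Paris", "3*3": "9"}.get(question, "")


test_set = [
    {"input": "2+2", "expected": "4"},
    {"input": "capital of France", "expected": "Paris"},
    {"input": "3*3", "expected": "9"},
]

# Score every variant on the same test set so results are directly comparable.
results = {
    "variant_a": evaluate(variant_a, test_set),
    "variant_b": evaluate(variant_b, test_set),
}
best = max(results, key=results.get)
```

Running every variant against the same fixed test set is what makes a change verifiable: a drop in score after an edit is a regression you can see before it ships.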

Unified Playground

Agenta provides a unified playground where teams can compare prompts and models side-by-side. This feature also allows users to save errors found in production to a test set, facilitating a seamless transition to testing and debugging.

Collaborative Workflow

The platform encourages collaboration among product managers, developers, and domain experts, allowing them to experiment, compare results, and debug prompts together. This feature enhances communication and ensures that all stakeholders are involved in the development process.

Fallom

End-to-End LLM Tracing

Fallom provides complete, OpenTelemetry-native tracing for every LLM call and agent action. This goes beyond simple logging to deliver a visual, interconnected map of your AI workflows. You can see the exact sequence of events, from the initial user prompt through intermediate tool calls and reasoning steps to the final response. This granular visibility is essential for debugging complex issues, understanding the "why" behind an agent's behavior, and optimizing the entire chain for performance and cost-efficiency.

Real-Time Cost Attribution & Analytics

Gain precise financial control over your AI spend with Fallom's detailed cost attribution engine. The platform automatically breaks down expenses by model, individual API call, user, team, or even specific customer sessions. This transparency is crucial for teams progressing from project-based budgets to company-wide AI rollouts, enabling accurate chargebacks, forecasting, and identifying optimization opportunities to ensure your AI investment delivers maximum return.
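
To make the idea of cost attribution concrete, here is a minimal sketch of how spend can be broken down by model, user, or team from per-call token counts. It is a generic illustration, not Fallom's implementation: the record fields, the `PRICES` table, and the function names are assumptions, and the prices are placeholders rather than real provider rates.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1,000 tokens; real rates vary by provider.
PRICES = {
    "model-a": {"input": 0.0005, "output": 0.0015},
    "model-b": {"input": 0.0030, "output": 0.0060},
}


def call_cost(call):
    """Cost of one LLM call, computed from its input and output token counts."""
    price = PRICES[call["model"]]
    return (
        call["input_tokens"] / 1000 * price["input"]
        + call["output_tokens"] / 1000 * price["output"]
    )


def attribute_costs(calls, key):
    """Sum call costs grouped by any record field, e.g. 'model', 'user', 'team'."""
    totals = defaultdict(float)
    for call in calls:
        totals[call[key]] += call_cost(call)
    return dict(totals)


calls = [
    {"model": "model-a", "user": "alice", "team": "support",
     "input_tokens": 2000, "output_tokens": 1000},
    {"model": "model-b", "user": "bob", "team": "support",
     "input_tokens": 1000, "output_tokens": 500},
    {"model": "model-a", "user": "alice", "team": "search",
     "input_tokens": 4000, "output_tokens": 0},
]

by_model = attribute_costs(calls, "model")
by_team = attribute_costs(calls, "team")
```

Because every call carries its own model, user, and team labels, the same records can be regrouped along any dimension without being collected again, which is what makes chargebacks and forecasting practical.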

Compliance-Ready Audit Trails

Built for regulated industries, Fallom is designed to reduce compliance risk as your AI operations evolve. It maintains immutable, detailed audit logs of every interaction, including full input/output logging, model versioning, and user consent tracking. These records support compliance with regulations and standards such as the EU AI Act, GDPR, and SOC 2, providing the evidence and control needed to scale AI responsibly and with full accountability.

Advanced Debugging with Tool & Session Context

Debugging agents requires understanding context. Fallom groups related traces into user or customer sessions, providing a holistic view of interactions over time. Furthermore, it offers deep visibility into every tool and function your agents call, displaying arguments and results in detail. This combination of session-level context and tool call visibility turns debugging from a frustrating hunt into a streamlined, efficient process.
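
The session grouping described here can be pictured with a short sketch. This is an assumption-laden illustration rather than Fallom's data model: the trace fields (`session_id`, `timestamp`, `tool`, `args`, `result`) and the function `group_by_session` are hypothetical.

```python
from collections import defaultdict


def group_by_session(traces):
    """Group trace records by session and order each session chronologically."""
    sessions = defaultdict(list)
    for trace in traces:
        sessions[trace["session_id"]].append(trace)
    for items in sessions.values():
        items.sort(key=lambda t: t["timestamp"])
    return dict(sessions)


traces = [
    {"session_id": "s1", "timestamp": 2, "tool": "search",
     "args": {"q": "refund policy"}, "result": "3 hits"},
    {"session_id": "s2", "timestamp": 1, "tool": None, "args": None, "result": None},
    {"session_id": "s1", "timestamp": 1, "tool": None, "args": None, "result": None},
]

sessions = group_by_session(traces)

# Tool calls made within one session, in order, with the arguments they received.
tool_calls_s1 = [(t["tool"], t["args"]) for t in sessions["s1"] if t["tool"]]
```

Seeing a session's traces in order, with each tool call's arguments and result attached, is what lets you follow a failure back to the step that caused it.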

Use Cases

Agenta

Streamlining AI Development

Agenta is ideal for AI development teams looking to streamline their workflows. By centralizing prompts and evaluation processes, teams can significantly reduce the time spent managing scattered resources and enhance collaboration.

Enhancing Debugging Processes

When debugging AI applications, Agenta provides tools to trace every request and pinpoint failure points effectively. This capability turns guesswork into evidence-based debugging, facilitating quicker resolutions to issues.

Facilitating Experimentation

Teams can use Agenta's unified playground to test and compare different prompts side by side. This supports the rapid iteration that improving LLM applications requires.

Empowering Domain Experts

Agenta allows domain experts to safely edit and experiment with prompts without needing coding skills. This functionality empowers subject matter experts to contribute directly to the development process, enriching the overall quality of the AI applications.

Fallom

Scaling Enterprise AI Agent Deployments

For enterprises transitioning AI agents from pilot programs to core business operations, Fallom provides the operational backbone. It allows platform teams to monitor the health, performance, and cost of hundreds of concurrent agent workflows, ensuring reliability for end-users and providing the data needed to justify further investment and expansion of AI capabilities across the organization.

Optimizing Cost and Performance of LLM Workloads

Development teams use Fallom to move from a "set and forget" model deployment to a continuous optimization cycle. By analyzing latency waterfalls, token usage patterns, and cost-per-call data, engineers can experiment with different models, prompt structures, and architectures. This data-driven approach leads to faster, cheaper, and more reliable AI features, directly improving the product's bottom line and user experience.

Ensuring Regulatory Compliance for AI Applications

Companies in finance, healthcare, or legal services use Fallom to build and audit compliant AI applications. The platform's detailed audit trails, consent tracking, and privacy controls provide the necessary documentation for internal reviews and external regulators. This enables these companies to innovate with AI while systematically managing risk and upholding their legal and ethical obligations.

Improving Customer Support with AI Analytics

Product and customer success teams leverage Fallom's session tracking and customer analytics to understand how users interact with AI features. They can identify power users, spot common failure points in conversations, and attribute support costs to specific clients. These insights guide product improvements, training data collection, and customer-specific model fine-tuning, evolving the AI from a generic tool to a tailored asset.

Overview

About Agenta

Agenta is an open-source LLMOps platform that helps AI teams develop and deploy reliable large language model (LLM) applications. It addresses the unpredictability inherent in LLMs by giving developers and subject matter experts a structured environment in which to work together. Teams can manage prompts, run evaluations, and observe production behavior in one place, which lets them experiment efficiently and validate changes with confidence. By centralizing these processes, Agenta replaces scattered prompts and siloed efforts with a single source of truth for LLM development, so teams can iterate quickly on real-time feedback.

About Fallom

Fallom is an observability platform built for LLM and AI agent workloads. It is designed for AI developers and enterprise teams who have moved beyond initial experimentation and are now scaling complex LLM and agent workloads in production. As these systems grow from simple prompts to intricate, multi-step workflows involving tools, databases, and conditional logic, traditional monitoring tools fall short. Fallom fills this gap by providing a real-time window into every LLM interaction. It captures prompts, outputs, tool calls, token usage, latency, and costs, turning opaque AI operations into a system that teams can inspect, manage, and optimize. Its core value proposition is helping businesses move from merely deploying AI to operating it well: ensuring reliability, controlling spend, and maintaining compliance as their AI initiatives mature.

Frequently Asked Questions

Agenta FAQ

What is LLMOps?

LLMOps, or Large Language Model Operations, refers to the practices and tools used to manage the lifecycle of large language models, including development, deployment, and monitoring of AI applications.

How does Agenta improve collaboration?

Agenta enhances collaboration by providing a centralized platform where product managers, developers, and domain experts can work together, experiment, and share insights, breaking down silos that often hinder productivity.

Can Agenta integrate with other tools?

Yes, Agenta integrates with popular frameworks and model providers such as LangChain, LlamaIndex, and OpenAI, so you can use the tools you prefer without vendor lock-in.

Is Agenta suitable for beginners?

Absolutely. Agenta is designed to be user-friendly, with features that allow even those without programming skills, such as domain experts, to contribute to prompt management and experimentation effectively.

Fallom FAQ

How quickly can I integrate Fallom into my existing application?

Integration is designed to be fast. With its single, OpenTelemetry-native SDK, you can typically instrument your LLM calls and start seeing traces in your Fallom dashboard in under five minutes. The platform works alongside your existing code, requiring minimal changes to begin collecting comprehensive observability data.

Does Fallom support all major LLM providers?

Yes, Fallom is built on open standards to prevent vendor lock-in and support your AI evolution. It is compatible with all major LLM providers, including OpenAI, Anthropic, Google Gemini, and open-source models. This means you can use a unified observability platform regardless of how your model strategy changes or expands over time.

How does Fallom handle sensitive or private user data?

Fallom includes enterprise-grade privacy controls for regulated environments. You can enable Privacy Mode, which allows you to capture full telemetry and trace data while redacting or disabling the logging of actual prompt and response content. This lets you maintain operational visibility and compliance auditing without storing sensitive information.
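
The principle behind a privacy mode like this can be sketched as follows: keep the operational metadata of each call and replace the content fields before anything is stored. This is a generic illustration, not Fallom's SDK. The field names in `CONTENT_FIELDS`, the record layout, and the function `redact_span` are assumptions.

```python
import copy

# Hypothetical names of the fields that carry user content.
CONTENT_FIELDS = ("prompt", "response")


def redact_span(span, placeholder="[REDACTED]"):
    """Return a copy of a trace record with content fields replaced.

    Metadata such as model, latency, and token counts is left untouched,
    so the record stays useful for monitoring and auditing.
    """
    redacted = copy.deepcopy(span)
    for field in CONTENT_FIELDS:
        if field in redacted:
            redacted[field] = placeholder
    return redacted


span = {
    "model": "model-a",
    "latency_ms": 420,
    "input_tokens": 12,
    "output_tokens": 30,
    "prompt": "My account number is 12345",
    "response": "Thanks, I found your account.",
}

safe = redact_span(span)
```

Redacting before storage, rather than filtering at display time, means the sensitive text never reaches the observability backend in the first place.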

Can I use Fallom for testing and evaluating my LLM prompts?

Absolutely. Fallom includes features for running evaluations on LLM outputs, allowing you to track metrics like accuracy, relevance, and hallucination rates. Coupled with its Prompt Store for version control and A/B testing, it creates a robust framework for continuously improving your prompts and catching regressions before they impact production users.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform that supports AI teams in developing and deploying reliable large language model applications. As organizations increasingly adopt AI technologies, users often seek alternatives to Agenta due to various factors, including pricing, specific feature sets, and compatibility with existing platforms. The need for tailored solutions that align with a team's unique workflow and project requirements can drive the search for different options.

When choosing an alternative to Agenta, it's essential to consider several key aspects. Evaluate the platform's ability to centralize workflow management, the robustness of its collaboration features, and the comprehensiveness of its observability tools. Additionally, understanding the support and community around the platform can significantly impact the efficiency and effectiveness of your LLM development process.

Fallom Alternatives

Fallom is an AI-native observability platform in the development and monitoring category. It provides real-time tracking, debugging, and cost transparency for large language model and AI agent workloads, helping teams optimize performance and ensure compliance.

Users often explore alternatives for various reasons. These can include budget constraints, the need for a different feature set, or specific platform integration requirements that better align with their existing tech stack and operational maturity.

When evaluating an alternative, consider your current and future needs. Key factors include the depth of observability for LLM calls, the clarity of cost attribution across teams, built-in compliance features for audit trails, and the ease of implementation with your current development workflow.