Crawlkit

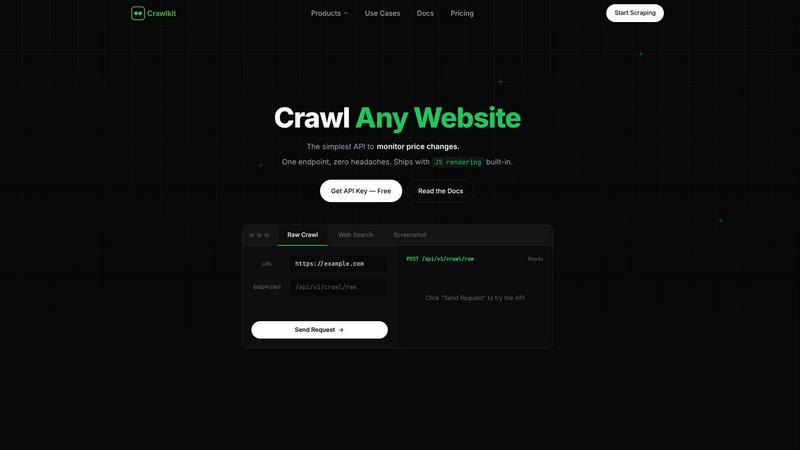

Crawlkit replaces complex scraping setups with a single, simple API for extracting data from any website.

About Crawlkit

Crawlkit is an API platform for web data extraction, built for developers and data teams who want to scale their data operations without running scraping infrastructure themselves. It replaces fragile, do-it-yourself scraping scripts, along with the work of managing proxies, headless browsers, and constant anti-bot breakages, with a single API call. It serves teams building data pipelines, competitive intelligence tools, market research platforms, and CRM enrichment systems, providing them with structured data from hard-to-scrape sources. Its main value proposition is turning the web into a clean, queryable API. By abstracting away proxy rotation, JavaScript rendering, CAPTCHA solving, and adaptive retry logic, Crawlkit lets teams focus on analyzing data, gaining insights, and building products rather than maintaining data collection infrastructure.

Features

Unified API for Diverse Sources

Crawlkit provides a single, consistent API endpoint to extract structured data from a vast array of platforms. Whether you need professional profiles from LinkedIn, social metrics from Instagram, app reviews from the Play Store, or parsed search results from Google, the interface remains the same. This eliminates the need to learn and integrate multiple specialized scrapers, streamlining your development process and accelerating your project's evolution from prototype to production.

Built-In Infrastructure Management

The platform handles the entire technical stack required for reliable scraping. This includes proxy rotation to avoid IP bans, full JavaScript rendering with headless browsers to load dynamic content, logic to bypass anti-bot protections, and automatic retries for failed requests. Your team can stop worrying about infrastructure stability and scale data collection while Crawlkit manages the hard parts.

Guaranteed Structured Data Output

Crawlkit doesn't just return raw HTML; it delivers parsed, clean, structured JSON. For every supported platform, the API returns data in a predictable schema, with fields such as follower counts, employee lists, job titles, or review ratings. You spend no time on parsing and cleaning and can move straight to analysis and integration.

Transparent, Credit-Based Pricing

With a simple pay-as-you-go credit system, Crawlkit offers full cost predictability and control. Each API call consumes a known number of credits, and credits never expire. There are no monthly commitments, hidden fees, or surprise overage charges. This pricing model supports the natural growth of your project, from initial experimentation with the free tier to scaled production use, with volume discounts that reward increased usage.

Use Cases

CRM and Lead Enrichment

Automatically enrich contact records in your CRM with data from professional networks. By pulling structured data from LinkedIn profiles, you can append job titles, current company information, skills, and experience to your leads. Turning basic contact lists into rich prospect profiles helps sales teams personalize outreach and improve conversion rates.

Competitive Intelligence and Market Research

Track competitors' digital footprints systematically. Monitor changes in their team on LinkedIn, analyze their app store reviews for user sentiment, or track their Instagram engagement metrics over time. Crawlkit lets you replace sporadic, manual checks with automated, real-time competitive dashboards that supply the data needed for strategic decisions.

App Store Optimization (ASO) Analysis

Gather and analyze reviews and details from both the Google Play Store and Apple App Store for your own or competing applications. Extract ratings, review text, version history, and feature lists to understand user feedback, identify pain points, and track feature adoption. With this data, ASO becomes a precise, iterative optimization process rather than guesswork.

Real-Time Search Engine Monitoring

Execute web searches programmatically and receive clean, parsed results. Filter by language, region, or date to monitor brand mentions, track keyword rankings, or gather the latest news and publications in any industry. This turns market awareness from passive reading into an active, automated alerting and analysis system.

Pricing

Crawlkit uses a straightforward, credit-based pricing model. You start with 100 free credits. Paid plans offer volume discounts: for example, $24 provides 25,000 credits, with larger bundles available. Each API endpoint costs a fixed number of credits per call (e.g., 1 credit for an Instagram profile, 2 for a LinkedIn company page). Key principles include no monthly commitments, credits that never expire, and automatic refunds for failed requests. This transparent model ensures predictable costs as your usage scales.
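The arithmetic behind these figures can be sketched as follows. This is a rough estimate only: it uses the numbers quoted above ($24 for 25,000 credits, 100 free credits, 1 credit per Instagram profile, 2 per LinkedIn company page) and assumes the $24 bundle rate applies pro rata. The function names and dictionary keys are hypothetical labels, not Crawlkit identifiers, and larger bundles may be priced differently.

```python
from decimal import Decimal

# Figures quoted in the pricing text; everything else here is illustrative.
BUNDLE_PRICE_USD = Decimal("24")
BUNDLE_CREDITS = 25_000
FREE_CREDITS = 100

# Per-call credit costs for the two endpoints the text names.
# The keys are hypothetical labels, not real endpoint names.
CREDITS_PER_CALL = {
    "instagram_profile": 1,
    "linkedin_company": 2,
}


def credits_needed(calls):
    """Total credits consumed by a mapping of endpoint label -> call count."""
    return sum(CREDITS_PER_CALL[name] * count for name, count in calls.items())


def estimated_cost_usd(calls):
    """Pro-rata cost at the $24 / 25,000 rate, after the free credits."""
    billable = max(credits_needed(calls) - FREE_CREDITS, 0)
    rate = BUNDLE_PRICE_USD / BUNDLE_CREDITS  # USD per credit
    return (rate * billable).quantize(Decimal("0.01"))


plan = {"instagram_profile": 5_000, "linkedin_company": 2_000}
print(credits_needed(plan))      # 9000
print(estimated_cost_usd(plan))  # 8.54
```

Because credits are sold in bundles, the amount actually paid would be the bundle price; the pro-rata figure only shows how much of a bundle a given workload uses.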

Frequently Asked Questions

What platforms does Crawlkit currently support?

Crawlkit supports a growing list of major platforms, including LinkedIn for company and profile data, Instagram for public profile and post metrics, and both the Google Play Store and Apple App Store for app details and reviews. It also offers a generic web search endpoint. The platform continues to add sources, and users are encouraged to request new ones.

How does Crawlkit handle websites with anti-bot measures?

Crawlkit's infrastructure is specifically designed to bypass common anti-bot protections. It uses a combination of residential proxy rotation, realistic browser fingerprinting, and adaptive request patterns that mimic human behavior. This sophisticated system is managed entirely by Crawlkit, freeing you from the constant battle against blocks and CAPTCHAs.

What happens if an API request fails?

Crawlkit operates on a "refund on failure" policy. If a request fails due to issues on Crawlkit's side (e.g., unable to retrieve data from the target site), the credits used for that request are automatically refunded to your account. This ensures you only pay for successful, complete data delivery.
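For teams that track credit usage on their own side, the policy described above can be modeled with a small ledger. This is a minimal sketch of the stated rule (failed requests do not consume credits), not Crawlkit's billing implementation; the `CreditLedger` class and its method are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class CreditLedger:
    """Client-side model of a credit balance with refund on failure."""
    balance: int
    history: list = field(default_factory=list)

    def record_call(self, cost: int, succeeded: bool) -> int:
        if cost > self.balance:
            raise ValueError("not enough credits for this call")
        self.balance -= cost          # credits are charged for the call
        if not succeeded:
            self.balance += cost      # and refunded if the request failed
        self.history.append((cost, succeeded))
        return self.balance


ledger = CreditLedger(balance=100)
ledger.record_call(cost=2, succeeded=True)    # charged: balance is 98
ledger.record_call(cost=1, succeeded=False)   # refunded: balance stays 98
print(ledger.balance)  # 98
```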

Is there a free tier to try Crawlkit?

Yes, you can start completely free with 100 credits upon sign-up. This allows you to test several API calls across different endpoints to evaluate the quality of the data and the reliability of the service without any financial commitment. You can upgrade to a paid plan at any time to purchase more credits.

Similar to Crawlkit

Subiq

Subiq simplifies SaaS subscription management for small teams, tracking tools and expenses to eliminate wasted spend and forgotten renewals.

Toon Tone

Train your color perception daily by guessing original cartoon character hues using HSB sliders in five quick rounds.

FX Radar

FX Radar evolves your trading with real-time news and AI-driven logic, delivering market-moving headlines in seconds for smarter decisions.

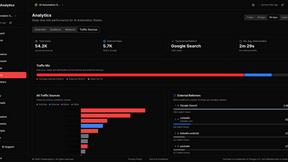

GhostlyX Privacy-First Web Analytics

GhostlyX delivers actionable web analytics insights while prioritizing user privacy through a lightweight, cookie-free platform.

Microplastic Intake App

Your journey to a cleaner life begins with tracking your microplastic intake through a research-backed assessment that evolves into personalized.

Webleadr

Webleadr empowers you to effortlessly find and contact businesses without websites, transforming leads into clients in just a few clicks.

TubeAnalytics

TubeAnalytics transforms raw YouTube data into actionable AI insights that scale your channel growth and revenue.

Metric Nexus

Metric Nexus unifies your marketing data so you can ask Claude questions in plain English for instant insights.